How to use async functions with Array.reduce in JavaScript

How to use Promises with reduce and how to choose between serial and parallel processing

In the first article, we covered how async/await helps with async commands, but it offers little help when it comes to asynchronously processing collections. In this post, we'll look into the reduce function, which is the most versatile collection function, as it can emulate all the other ones.
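To make the claim about emulating other collection functions concrete, here is a minimal synchronous sketch of map and filter written on top of reduce. The helper names mapWithReduce and filterWithReduce are illustrative only and not part of any library, and unlike the built-in methods they pass just the element to the callback.

```javascript
// Illustrative helpers (hypothetical names): map and filter built on reduce.
const mapWithReduce = (arr, fn) =>
  arr.reduce((memo, e) => [...memo, fn(e)], []);

const filterWithReduce = (arr, predicate) =>
  arr.reduce((memo, e) => (predicate(e) ? [...memo, e] : memo), []);

console.log(mapWithReduce([1, 2, 3], (e) => e * 2));
// [2, 4, 6]
console.log(filterWithReduce([1, 2, 3], (e) => e % 2 === 1));
// [1, 3]
```

In both helpers the memo is the array built so far, and each iteration returns a new array instead of mutating the old one.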

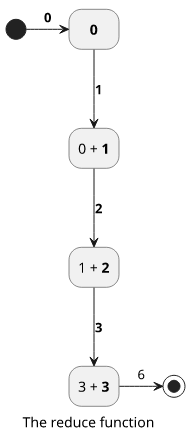

The reduce function

Reduce iteratively constructs a value, which is not necessarily a collection, and returns it. That's where the name comes from, as it reduces a collection to a value.

The iteratee function gets the previous result, called memo in the examples below, and the current value, e.

The following function sums the elements, starting with 0 (the second argument of reduce):

const arr = [1, 2, 3];

const syncRes = arr.reduce((memo, e) => {
  return memo + e;
}, 0);

console.log(syncRes);
// 6

| memo | e | result |
|---|---|---|
| 0 (initial) | 1 | 1 |
| 1 | 2 | 3 |
| 3 | 3 | (end result) 6 |

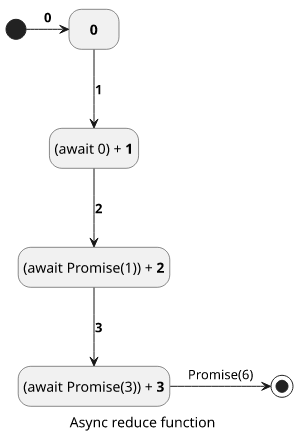

Asynchronous reduce

An async version is almost the same, but it returns a Promise on each iteration, so memo will be the Promise of the previous result. The iteratee function needs to await it in order to calculate the next result. On the first iteration memo is the plain initial value, which await passes through unchanged:

// utility function for sleeping
const sleep = (n) => new Promise((res) => setTimeout(res, n));

const arr = [1, 2, 3];

const asyncRes = await arr.reduce(async (memo, e) => {
  await sleep(10);
  return (await memo) + e;
}, 0);

console.log(asyncRes);
// 6

| memo | e | result |
|---|---|---|
| 0 (initial) | 1 | Promise(1) |
| Promise(1) | 2 | Promise(3) |
| Promise(3) | 3 | (end result) Promise(6) |

With the structure of async (memo, e) => await memo, reduce can handle any async function, and its result can be awaited.
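As an illustration of how far this structure stretches, the following sketch builds an async filter on top of it. The names asyncFilter and isOddAsync are hypothetical, and the predicate only simulates an asynchronous check with a sleep.

```javascript
// Utility function for sleeping, same as in the article.
const sleep = (n) => new Promise((res) => setTimeout(res, n));

// Hypothetical async predicate, standing in for e.g. a remote lookup.
const isOddAsync = async (e) => {
  await sleep(10);
  return e % 2 === 1;
};

// Async filter built from the async (memo, e) => await memo structure.
// Declared async so it returns a Promise even for an empty array,
// where reduce would hand back the plain initial value.
const asyncFilter = async (arr, predicate) =>
  arr.reduce(async (memo, e) => {
    const keep = await predicate(e);
    return keep ? [...(await memo), e] : memo;
  }, []);

asyncFilter([1, 2, 3], isOddAsync).then((res) => console.log(res));
// [1, 3]
```

Because every iteration awaits memo before appending, the result keeps the original element order even though the predicates run at the same time.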

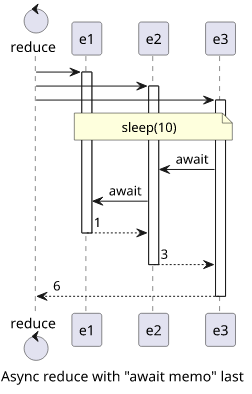

Timing

Concurrency has an interesting property when it comes to reduce. In the synchronous version, elements are processed one-by-one, which is not surprising as they rely on the previous result. But when an async reduce is run, all the iteratee functions start running in parallel and wait for the previous result (await memo) only when needed.

await memo last

In the example above, all the sleeps happen in parallel, because the await memo, which makes the function wait for the previous one to finish, comes after the sleep.

const arr = [1, 2, 3];

const startTime = new Date().getTime();

const asyncRes = await arr.reduce(async (memo, e) => {
  await sleep(10);
  return (await memo) + e;
}, 0);

console.log(`Took ${new Date().getTime() - startTime} ms`);
// Took 11-13 ms

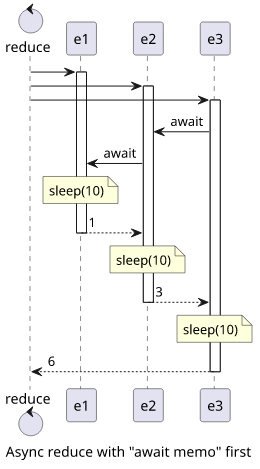

await memo first

But when the await memo comes first, the functions run sequentially:

const arr = [1, 2, 3];

const startTime = new Date().getTime();

const asyncRes = await arr.reduce(async (memo, e) => {
  await memo;
  await sleep(10);
  return (await memo) + e;
}, 0);

console.log(`Took ${new Date().getTime() - startTime} ms`);
// Took 36-38 ms

This behavior is usually not a problem, as it naturally means that everything that does not depend on the previous result is calculated immediately, and only the dependent parts wait for the previous value.
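The difference between the two orderings can also be observed without timing, by recording when each sleep starts and when each iteration finishes. The sketch below is one way to do that. The function name traceReduce and its awaitMemoFirst flag are made up for this illustration.

```javascript
const sleep = (n) => new Promise((res) => setTimeout(res, n));

// Records when each iteration starts its sleep and when it finishes.
// awaitMemoFirst is an illustrative flag that switches between the two variants.
const traceReduce = async (awaitMemoFirst) => {
  const events = [];
  const sum = await [1, 2, 3].reduce(async (memo, e) => {
    if (awaitMemoFirst) {
      await memo; // wait for the previous iteration before doing anything
    }
    events.push(`start ${e}`);
    await sleep(10);
    const result = (await memo) + e;
    events.push(`done ${e}`);
    return result;
  }, 0);
  return { sum, events };
};

traceReduce(false).then(({ events }) => console.log(events.join(", ")));
// start 1, start 2, start 3, done 1, done 2, done 3

traceReduce(true).then(({ events }) => console.log(events.join(", ")));
// start 1, done 1, start 2, done 2, start 3, done 3
```

With await memo last, all three sleeps are started before any iteration finishes. With await memo first, each iteration begins only after the previous one has produced its result.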

When parallelism matters

But in some cases, it might be unfeasible to do something ahead of time.

For example, I had a piece of code that prints different PDFs and concatenates them into one single file using the pdf-lib library.

This implementation runs the resource-intensive printPDF function in parallel:

const result = await printingPages.reduce(async (memo, page) => {
  // printPDF is called right away for every page,
  // before the previous iteration has finished
  const pdf = await PDFDocument.load(await printPDF(page));

  const pdfDoc = await memo;

  (await pdfDoc.copyPages(pdf, pdf.getPageIndices()))
    .forEach((copiedPage) => pdfDoc.addPage(copiedPage));

  return pdfDoc;
}, PDFDocument.create());

I noticed that when there were many pages to print, it consumed too much memory and slowed down the overall process.

A simple change made the printPDF calls wait for the previous one to finish:

const result = await printingPages.reduce(async (memo, page) => {
  // wait for the previous iteration first,
  // so only one printPDF call runs at a time
  const pdfDoc = await memo;

  const pdf = await PDFDocument.load(await printPDF(page));

  (await pdfDoc.copyPages(pdf, pdf.getPageIndices()))
    .forEach((copiedPage) => pdfDoc.addPage(copiedPage));

  return pdfDoc;
}, PDFDocument.create());

Conclusion

The reduce function is easy to convert into an async function, but parallelism can be tricky to figure out. Fortunately, it rarely breaks anything, but in some resource-intensive or rate-limited operations, knowing how the functions are called is essential.
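One way to check how a given reduce schedules its calls is to count how many of them are in flight at the same time. The following is a rough sketch under stated assumptions: trackConcurrency and measurePeaks are hypothetical helpers, and heavyTask is a stand-in for a resource-intensive call such as printPDF.

```javascript
const sleep = (n) => new Promise((res) => setTimeout(res, n));

// Wraps an async function and tracks how many calls are in flight at once.
const trackConcurrency = (fn) => {
  let running = 0;
  const stats = { peak: 0 };
  const tracked = async (...args) => {
    running += 1;
    stats.peak = Math.max(stats.peak, running);
    try {
      return await fn(...args);
    } finally {
      running -= 1;
    }
  };
  return { tracked, stats };
};

// Hypothetical stand-in for a resource-intensive call such as printPDF.
const heavyTask = async (e) => {
  await sleep(10);
  return e;
};

const measurePeaks = async () => {
  const items = [1, 2, 3, 4];

  // await memo last: every heavy call starts right away.
  const parallel = trackConcurrency(heavyTask);
  await items.reduce(async (memo, e) => {
    const value = await parallel.tracked(e);
    return [...(await memo), value];
  }, []);

  // await memo first: each heavy call waits for the previous iteration.
  const serial = trackConcurrency(heavyTask);
  await items.reduce(async (memo, e) => {
    const results = await memo;
    const value = await serial.tracked(e);
    return [...results, value];
  }, []);

  return { parallelPeak: parallel.stats.peak, serialPeak: serial.stats.peak };
};

measurePeaks().then((peaks) => console.log(peaks));
// { parallelPeak: 4, serialPeak: 1 }
```

The peak equals the number of items when await memo comes last and stays at one when it comes first, which is the property that matters for memory-heavy or rate-limited calls.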

An example is available on GistRun
