Do not use fs sync methods in JavaScript, use fs.promises instead

Synchronous functions don't play well with JavaScript's single thread. Fortunately, there is an alternative.

Why sync execution is bad

Node.js's fs module provides a long list of ...Sync functions, such as fs.readFileSync, fs.rmSync, and fs.writeFileSync. These interface with the filesystem and return synchronously when the operation is finished.

The easiest example is to read a file:

const contents = fs.readFileSync("file.txt");

The contents value will be the contents of file.txt.

If you run this code with a file that actually exists you won't notice anything wrong with this call. The program executes instantly, and even if you read several files in succession it won't slow down the execution.

So, what's wrong with this?

While it doesn't seem like it, accessing the filesystem is slow. Sure, if "fast" means "in a blink of an eye" then disk access is usually fast. But processors work on the nanosecond (10^-9 s) scale, and SSD access takes several microseconds. Of course, there are many layers between JavaScript code and the CPU, but even in the best of cases disk access is thousands of times slower than executing code.

And it's not just about speed but the variability of speed. A filesystem can be attached via a network connection, and that bumps up the access time by orders of magnitude. Or it can even be a browser tab mounted as a file, and accessing that might take seconds or more.

Because of this, you can't assume that filesystem access is fast, even when it is indistinguishable from fast things.
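To get a feel for these numbers on your own machine, a single synchronous read can be timed with process.hrtime.bigint(). This is only a rough sketch: the scratch file name is made up for the measurement, and a small file that was just written is likely served from the OS cache, so it shows the best case rather than a slow disk or a network mount.

```javascript
const fs = require("fs");
const os = require("os");
const path = require("path");

// Hypothetical scratch file, created only for this measurement.
const file = path.join(os.tmpdir(), `read-timing-${process.pid}.txt`);
fs.writeFileSync(file, "x".repeat(1024));

// Time one synchronous read with nanosecond resolution.
const start = process.hrtime.bigint();
const contents = fs.readFileSync(file);
const elapsedNs = process.hrtime.bigint() - start;

// Clean up the scratch file.
fs.unlinkSync(file);

console.log(`Read ${contents.length} bytes in ${elapsedNs} ns`);
```

Even this best-case number is typically far above the cost of a few CPU instructions, and it is the lower bound, not the value you can rely on.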

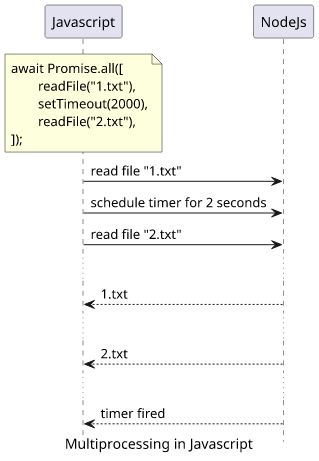

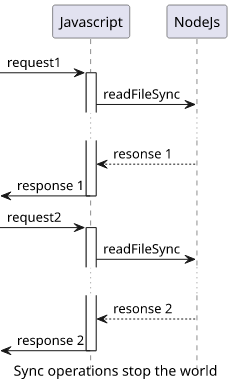

Combine this slow operation with JavaScript's single-threadedness and you'll see why sync functions are bad. While async/await and callbacks do a good job of hiding that there is only a single execution thread that does everything, they do not make the language capable of true multiprocessing. The only way to make multiple HTTP requests, read multiple files, or even just schedule multiple timers at the same time is to rely on Node.js to do that and provide a callback that it will invoke.

Sync methods don't play well in this structure. Since they stop the single thread, nothing else can happen until they are finished.

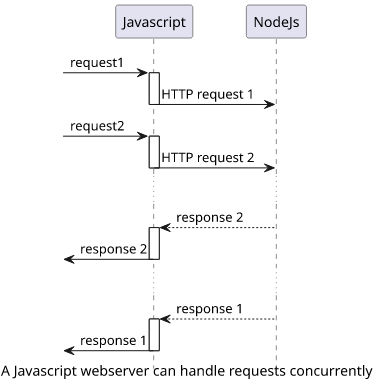

Imagine you have a web server where handling a request consists mostly of sending database calls. This setup scales well, as most of the work is done by Node.js in a parallelized way.

But when the response needs to read a file and that is implemented synchronously, it introduces a "stop the world" construct.
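The "stop the world" effect can be made visible with a timer. The sketch below uses a busy-wait loop as a stand-in for a slow synchronous call (blockFor and measureTimerDelay are hypothetical helper names, not part of Node.js) and measures how late a 10 ms timer actually fires.

```javascript
// Stand-in for a slow synchronous call such as fs.readFileSync on a slow
// filesystem: it keeps the single thread busy for `ms` milliseconds.
function blockFor(ms) {
  const end = Date.now() + ms;
  while (Date.now() < end) {
    // busy-wait, nothing else can run in the meantime
  }
}

// Schedules a 10 ms timer, then blocks the thread for `blockMs`.
// Resolves with the time that passed before the timer callback ran.
function measureTimerDelay(blockMs) {
  return new Promise((resolve) => {
    const start = Date.now();
    setTimeout(() => resolve(Date.now() - start), 10);
    blockFor(blockMs);
  });
}

const delay = measureTimerDelay(200);
delay.then((elapsed) => {
  console.log(`10 ms timer fired after ${elapsed} ms`);
});
```

The timer cannot fire before the blocking call returns, so the reported delay is at least the blocking time. In a web server, every pending request would be waiting in the same way.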

Testing slow I/O

But testing how slow files affect the program execution has a problem: files are usually fast ("in a blink of an eye"). So how can you actually see the difference between a sync and an async readFile?

Files are way more flexible than just bytes written to a disk. You can create a FIFO that provides a pipe one process can write into and another process can read from. And from the point of view of Node.js, FIFOs behave like regular files.

To create a FIFO, use mkfifo:

mkfifo p1
mkfifo p2
mkfifo p3

To write some data into them, use an echo with a redirection:

echo "data" > p1

If a process is reading this file it will get this text.
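The same round trip can be sketched from Node.js itself. The example below assumes a Unix-like system where the mkfifo command is available. The fifoRoundTrip helper and the temporary directory prefix are made up for illustration. It creates a FIFO, then starts a reader and a writer at the same time, since each side waits for the other to open the pipe.

```javascript
const fs = require("fs");
const os = require("os");
const path = require("path");
const util = require("util");
const execFile = util.promisify(require("child_process").execFile);

// Creates a FIFO in a temporary directory, writes to it and reads from it
// concurrently, then cleans up. Resolves with the text that was read.
async function fifoRoundTrip() {
  const dir = await fs.promises.mkdtemp(path.join(os.tmpdir(), "fifo-demo-"));
  const fifo = path.join(dir, "p1");
  await execFile("mkfifo", [fifo]);
  try {
    // Both operations are started before either is awaited: the read
    // only finishes once the writer has written and closed the pipe.
    const [contents] = await Promise.all([
      fs.promises.readFile(fifo, "utf8"),
      fs.promises.writeFile(fifo, "data\n"),
    ]);
    return contents;
  } finally {
    await fs.promises.rm(dir, { recursive: true, force: true });
  }
}

const roundTrip = fifoRoundTrip();
roundTrip.then((contents) => console.log(JSON.stringify(contents)));
```

Because the reader does not finish until a writer shows up, a FIFO gives full control over how "slow" a file read is.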

Sync reading

Let's consider this code:

const fs = require("fs");

["p1", "p2", "p3"].map(async (f) => {

console.log(`Reading ${f}`);

fs.readFileSync(f);

console.log(`Reading ${f} finished`);

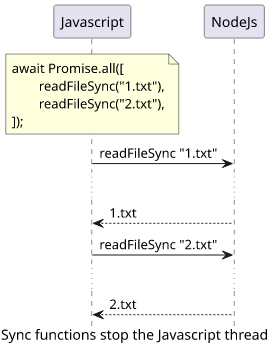

});On first sight, you might think the three files (p1, p2, and p3) are read in parallel. There is an async map that creates a Promise for each

element, then all three reads a file.

But running this code shows that it behaves synchronously. It starts reading the first file, then stops until it's finished. Then it starts reading the second, waits for it, and so on.

Reading p1
$ echo "p" > p1
Reading p1 finished
Reading p2
$ echo "p" > p2
Reading p2 finished
Reading p3
$ echo "p" > p3
Reading p3 finished

This shows how readFileSync stops the world for JavaScript. It affects seemingly unrelated things, such as Promises running in parallel, timers, callbacks, and network requests.
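The async keyword on the callback does not help because an async function runs synchronously until it reaches its first await. A small sketch without any file access shows the ordering (the task function and the order array are only for illustration):

```javascript
const order = [];

async function task(name) {
  // This part runs synchronously, as part of the call to task().
  order.push(`${name} start`);
  // First suspension point: the rest runs later, as a microtask.
  await null;
  order.push(`${name} end`);
}

task("a");
task("b");
order.push("sync code done");

// At this point only the synchronous parts have run.
console.log(order.join(", "));
```

Everything before the first await behaves like ordinary synchronous code. In the FIFO example there is no await at all, so each callback runs to completion, blocking read included, before map moves on to the next element.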

fs.promises

Fortunately, there is a better alternative. Recent Node.js versions offer promisified versions of the fs functions, and these can be used directly in an async flow.

const contents = await fs.promises.readFile("...");

Since these functions don't rely on stopping the world but are just an abstraction over the callback-based fs functions, they don't affect the scalability of the program. And with async/await, the code is almost the same:

const fs = require("fs");

["p1", "p2", "p3"].map(async (f) => {
  console.log(`Reading ${f}`);
  await fs.promises.readFile(f);
  console.log(`Reading ${f} finished`);
});

Running this shows that the three files are read independently:

Reading p1
Reading p2
Reading p3
$ echo "p" > p1
Reading p1 finished
$ echo "p" > p3
Reading p3 finished
$ echo "p" > p2
Reading p2 finished

Conclusion

Potentially long-running synchronous functions in JavaScript are relics from the early days of the language. Sync HTTP requests, sync exec, and fs.*Sync were useful when writing async code usually ended up in callback hell. But now, with async/await, they are just pitfalls that are easily avoidable yet not easy to detect.
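As a closing sketch, this is roughly what replacing a series of readFileSync calls could look like when the contents of several files are needed together. The readAll and demo helpers and the file names are assumptions made for this example. The point is that all reads are started before any of them is awaited.

```javascript
const fs = require("fs");
const os = require("os");
const path = require("path");

// Starts every read first, then waits for all of them together.
// The results come back in the same order as `paths`.
async function readAll(paths) {
  return Promise.all(paths.map((p) => fs.promises.readFile(p, "utf8")));
}

// Creates three scratch files, reads them back concurrently, cleans up.
async function demo() {
  const dir = await fs.promises.mkdtemp(path.join(os.tmpdir(), "read-all-"));
  const paths = ["a.txt", "b.txt", "c.txt"].map((name) => path.join(dir, name));
  try {
    await Promise.all(
      paths.map((p, i) => fs.promises.writeFile(p, `file ${i}`))
    );
    return await readAll(paths);
  } finally {
    await fs.promises.rm(dir, { recursive: true, force: true });
  }
}

const result = demo();
result.then((contents) => console.log(contents));
```

If one of the reads is slow, the others and the rest of the program keep making progress while it is pending.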