Reuse code with domain-specific steps in collection pipelines

How being mindful of collection processing promotes code reuse

Why extend pipelines

So far, we've only looked into how to add new functions to collection pipelines. In this post, we'll look into why such extensions might be necessary.

We've used a rather simple head function as an example, which raises the obvious question: What if a library provides all the fundamental steps? In that case, extending the pipeline with new functions is a non-issue, and all the problems we've talked about previously are non-existent.

If you only want to use generic, building-block type functions, this might be true. In that case, choose a library that provides all the functions you need.

But for complex apps, functions that are specific to the domain might be needed to promote code reuse. In that case, no library can provide them out of the box.

This post argues that even though libraries can provide all the necessary building blocks for collection processing, being conscious about extending the pipelines promotes code reuse and thus a cleaner architecture.

Domain-specific steps

In simple cases, moving the iteratee to a separate function is enough.

As an example, filter an array of users by some non-trivial condition:

const users = [{name: "user1", active: false, score: 50}, ...];

const filtered = users.filter((user) => {
  return user.active && user.score >= 50;
});

If you need this filtering in many places, refactor it to a function:

const byActiveAndPresent = (user) => {
  return user.active && user.score >= 50;
};

const usernames = users
  .filter(byActiveAndPresent)
  .map((user) => user.name);

const scores = users
  .filter(byActiveAndPresent)
  .map((user) => user.score);

Extending the pipeline

The problem arises when the change itself does not fit into a filter, a map, or any of the other functions that the pipeline provides.

For example, filter out the inactive users and also assign an activity rating to the remaining objects. This operation is a filter followed by a map, so it does not fit into either of them alone:

const addActivityScore = (user) => {
  ...
};

const processedUsers = users
  .filter(byActiveAndPresent)
  .map(addActivityScore);

Moving this to a separate function is usually done with a ([users]) => [users] signature:

const processUsers = (users) => {
  return users
    .filter(byActiveAndPresent)
    .map(addActivityScore);
};

But in this case, this function no longer fits into the pipeline:

const activityScores =
  processUsers(users)
    .map((user) => user.activity);

Instead of a flat chain of steps, the pipeline now starts with a nested function call, and we're back to square one.

Using functional composition

Extending Arrays is an anti-pattern, as we've seen in a previous post in this series. Implementing and using chaining comes with its own problems.

But function composition offers a solution.

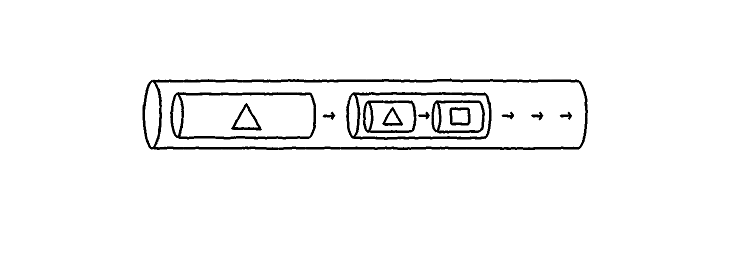

As a recap, we have a flow function that operates similarly to the UNIX pipe, composing the argument functions. The map and filter functions are partially applied, i.e., they get the iteratee on the first call and the collection itself on the second one.
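A minimal sketch of these helpers, assuming plain arrays as the collection type. The implementations below are illustrative rather than the ones from the earlier posts or from any particular library, and isEven and double are hypothetical iteratees used only for the demonstration:

```javascript
// flow composes functions left to right, like a UNIX pipe:
// flow(f, g)(x) is the same as g(f(x)).
const flow = (...fns) => (input) => fns.reduce((acc, fn) => fn(acc), input);

// Partially applied steps: the iteratee comes on the first call,
// the collection on the second one.
const filter = (predicate) => (collection) => collection.filter(predicate);
const map = (iteratee) => (collection) => collection.map(iteratee);

// Hypothetical iteratees, for illustration only.
const isEven = (n) => n % 2 === 0;
const double = (n) => n * 2;

const doubledEvens = flow(
  filter(isEven),
  map(double)
)([1, 2, 3, 4]);

console.log(doubledEvens); // [4, 8]
```

Because every step has the same collection-in, collection-out shape, the result of flow is itself a step with that shape.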

The filter-and-map example from the previous section, rewritten to use functional composition:

const processedUsers = flow(
  filter(byActiveAndPresent),
  map(addActivityScore)
)(users);

To move this to a reusable function, just extract the pipeline itself:

const processUsers = flow(
  filter(byActiveAndPresent),
  map(addActivityScore)
);

This value is itself a valid pipeline, and can be part of a larger one:

const activityScores = flow(
  processUsers,
  map((user) => user.activity)
)(users);

This way, arbitrarily deep pipelines are possible without losing the flat structure. Parts used in multiple places can be moved to a central location, promoting code reuse.
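To see the reuse end to end, here is a self-contained sketch under a few assumptions: the flow, filter, and map helpers are minimal array-based stand-ins, the user data is made up, and addActivityScore is a hypothetical rating, since the original leaves its implementation out:

```javascript
// Minimal array-based helpers (illustrative stand-ins).
const flow = (...fns) => (input) => fns.reduce((acc, fn) => fn(acc), input);
const filter = (predicate) => (collection) => collection.filter(predicate);
const map = (iteratee) => (collection) => collection.map(iteratee);

// Hypothetical sample data.
const users = [
  {name: "user1", active: false, score: 50},
  {name: "user2", active: true, score: 80},
  {name: "user3", active: true, score: 20},
];

const byActiveAndPresent = (user) => user.active && user.score >= 50;

// Hypothetical rating; the real calculation is not shown in the article.
const addActivityScore = (user) => ({...user, activity: user.score / 100});

// The domain-specific step, defined once.
const processUsers = flow(
  filter(byActiveAndPresent),
  map(addActivityScore)
);

// Reused as an ordinary step in two different pipelines.
const activityScores = flow(processUsers, map((user) => user.activity))(users);
const usernames = flow(processUsers, map((user) => user.name))(users);

console.log(activityScores); // [0.8]
console.log(usernames); // ["user2"]
```

Both pipelines stay flat, and a change to the filtering or rating logic only has to be made in processUsers.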

ImmutableJs's update

ImmutableJs provides a function called update that can be used to achieve the same effect. It passes the whole collection to the given function and returns the result:

const users = Immutable.List(...);

const processUsers = (users) => {
  return users
    .filter(byActiveAndPresent)
    .map(addActivityScore);
};

const activityScores = users
  .update(processUsers)
  .map((user) => user.activity);

With update, ImmutableJs collections are easy to extend with domain-specific steps.

Conclusion

Extending a collection pipeline with custom processing steps is an everyday task in complex apps. Doing it right promotes code reuse.

The most important step is to know the libraries. If they provide a way of adding custom steps, like ImmutableJs does, use that. If not, use functional composition instead of Array#Extras for any non-trivial processing.